While a lot of software for creating and managing scale comes out of supercomputing centers, hyperscalers, and the largest public cloud builders, there is still plenty of innovation being done by people who need to tackle scale outside of these upper echelon organizations. Two of them are Mitchell Hashimoto and Armon Dadgar, the co-founders of HashiCorp, and they have spent more than a decade building what is turning out to be the likely commercial alternative to the Kubernetes stack – which also supports Kubernetes if you really want to do that, too.

Like many open source projects that have made the leap to commercial success – and we are not saying that there are many of those – the first project in the Hashi Stack, called Vagrant, was a personal project of Hashimoto that created a kind of consistent configuration wrapper around application software that made it easier to package and update. Eventually Engine Yard – remember that platform cloud alternative to Red Hat’s original OpenShift and VMware’s Cloud Foundry? – sponsored Vagrant, which originally ran on Oracle’s VirtualBox hypervisor but which was expanded to include VMware’s ESXi, Red Hat’s KVM, and Microsoft’s Hyper-V hypervisors as well as the custom Xen hypervisor used by Amazon Web Services.

Hashimoto and Dadgar both got their bachelor’s degrees in computer science from the University of Washington and they also worked together at Kiip, which is a mobile ad tech and data platform provider based in San Francisco and which has Coca-Cola, Kellogg’s, Procter & Gamble, McDonald’s, and Johnson & Johnson as its marquee customers. The Kiip ad engine was built in Python, Ruby, Bash, and Puppet, and when it was first turned on in 2010 (when Vagrant was a side project for Hashimoto), it could process a measly 1 query per second at 200 milliseconds of average latency, which is right at the impatience limit of the human attention span. And when they founded HashiCorp two years later, that Kiip system they left in the hands of their former employer was revved up to 2,000 queries per second at a 20 millisecond average response time. That’s a 2,000X improvement in throughput and a 10X improvement in latency, which is not too shabby.

Which is why the ambitions of the two HashiCorp co-founders were not absurd when they explicitly set out to build a modular software toolbox that could be the kernel of a true software platform, inspired by Unix, not Linux. (We will get to that in a moment.) Well, maybe a little absurd. But so is co-founding a new IT publication in 2015 to take on the upper echelon of computing architecture. . . .

The Hashi Stack now has ten core components, and here they are in order of release:

That’s a pretty steady beat of tool additions and a fairly complete stack. And HashiCorp is not trying to do everything, as Dadgar explains to The Next Platform, but rather do the core things well that others have not and then integrate with the other good tools that are needed to make a true and complete software platform.

Timothy Prickett Morgan: Right off the bat, we have not done a good job covering the rise of HashiCorp, and particularly the Terraform provisioning tool, so we owe you an apology. But for what it is worth, we have been paying attention. The stack that you have built is unique and is as complete as anything else anybody has put together. It is clearly now a platform in its own right.

My spider sense is going off and sooner or later somebody big is going to want to take control of Terraform. I am surprised it hasn’t happened already, to be honest. Doesn’t someone want to counterbalance Red Hat’s OpenShift and build a big business?

Armon Dadgar: I think we want to build a big business. [Laughter]

TPM: Cisco Systems is a possible fit, and they have a partnership with you. Hewlett Packard and Dell don’t want to be in the software business, which shows they don’t understand the modern IT business at some level. Microsoft could be a good fit. But we want a reprise of the OpenStack-Mesos-Kubernetes showdown. So maybe the VMware stack versus the IBM Red Hat OpenShift stack versus the Cisco Hashi stack.

Anyway, is Terraform the de facto standard for provisioning infrastructure in the datacenter today?

Armon Dadgar: I think that is a “Yes” at this point, but it is more than that.

When we started, we wanted to build a full portfolio that covers all of these pieces. I think we were intentional about laying out all the components. In the first few years of our life, and really from 2013 through 2016, we just kept expanding until we got to the stack as it’s known today. After that, we really shifted gears, and were super-focused on making this thing the de facto standard and then building a commercial business on top of that. We really didn’t focus on commercialization up until 2016 in any meaningful way.

From there, we had a three-pronged strategy, which has got us where we are today. One was relentless open source evangelism. We have a huge developer relations team. Mitchell and I still do a ton of developer evangelism ourselves, and it’s important to build developer love. I think the second piece was engaging all of our tech partners and the ecosystem around about building Terraform integrations. Now we have well over one hundred technology partners, and Terraform has over a thousand integrations through that. And the third prong was when we did start focusing commercially in 2016, we targeted the Global 2000 and we started winning the lighthouse accounts, like knocking over a big bank.

TPM: You can’t say “knock over a big bank.” It means you have robbed it!

Armon Dadgar: Well, they willingly gave us the money.

TPM: Whatever they gave you, it is pocket change they can cover by raising our bank fees.

Armon Dadgar: JP Morgan Chase is a great example. They gave us their innovation award last year because they’ve standardized how they provision with Terraform. And then they tell all their vendors, such as Cisco and NetApp and VMware, to build Terraform integrations.

We have been driven by the bottom-up groundswell of the community, followed by the ecosystem lean in to build out a network effect and then we knocked over a bunch of these Global 2000 customers.

TPM: Where are you at today? Give us some metrics about where you are on the hockey stick. Are you in that weird place where every time you turn around, there’s like 2X more people working at the company?

Armon Dadgar: Pretty much. We had around 800 employees last year and that is now pushing up to 1,400 employees. I lose track myself. I think we will end the year at close to 2,000 people depending on how quickly we can keep hiring. We are well north of 1,000 paying enterprise customers, and we have close to 250 of the Global 2000. So we are past the early startup phase that we were in back in 2016.

TPM: This is where any business becomes interesting to me. I like things when they’re first starting and there is the concept and the ambition. It’s fun writing about projects as they emerge and then turn into companies, but then it turns into a blizzard of point releases and we let some time pass before we take a look again at what is happening and how the market is receiving whatever technology they have created.

Armon Dadgar: In that middle phase, you don’t know if they’re going to sink or swim. I’d like to think we’re past the sink or swim.

TPM: We spent a lot of time with CoreOS when we started The Next Platform because we thought that looked important, and the same for Mesos and OpenStack and a few other evolving Kubernetes stacks.

I think it is safe to say that HashiCorp is swimming. I mean, you’re not walking on water yet, but, you know, VMware is not going to be able to do that much longer. VMware can persist for a long time, because of the vast 300,000-strong customer base and its long history of using vSphere. But in the long run, VMware prices have to come down to compete with better and better container platforms.

Armon Dadgar: I think of CA, which proves you can be around for a long time way after you are irrelevant. Decline can be very slow, gradual.

TPM: And weird. How in the hell did Computer Associates end up being acquired by Broadcom?

Armon Dadgar: A merger of two Titanics [Laughter]

TPM: Let’s not go there. [Laughter]

So what do you do now? Just keep doing what you’re already doing? You are growing like crazy, you have raised a fair amount of money. . . .

Armon Dadgar: Our recent funding was our Series E financing in March 2020 for $175 million. In total, we have raised $349.2 million.

TPM: When I start seeing Series F and Series G, that makes me pause except in special circumstances. Series E is normal. Are you trying to go public? You know, there’s probably a SPAC or two that’s desperate for you. . . .

Armon Dadgar: [Laughter] Our finance team is having to hit delete a lot, going through these things. But seriously, Dave McJannet, our CEO, has talked about going public. We’ve always been of the mind that our opportunity is so big that we want to build a standalone, independent business.

There’s definitely something to control and total ownership of your destiny – these are very valuable things.

TPM: Well, the VC guys probably want to cash out on a high note.

Armon Dadgar: That always becomes the counterbalance. At some point, they want their liquidity. So it’s always a careful balance. Going public helps with employee retention as well over time.

TPM: That is, if someone doesn’t try to make you an offer you can’t refuse, like EMC did to VMware way back when. Google? Probably not. Microsoft? Maybe. They are acquisitive.

Armon Dadgar: I think it’s worth talking about the cloud relationships in that respect. The value that HashiCorp brings is that we are that neutral Switzerland. We don’t have a cloud affiliation. We don’t sell you a cloud. I always describe our relationship with the clouds like this: they sell power, we sell power lines. And I think from the customer perspective, that’s valuable because they know they are going to have a relationship with all of them and they don’t want to be deeply wedded to CloudFormation or whatever because then they have no real leverage with the public clouds.

So I think HashiCorp being owned by a hyperscaler would break that. All of a sudden, we would not really be neutral. It’s almost what happened with Red Hat post the IBM acquisition. . . .

TPM: And what almost happened with VMware when Dell got its hands on it.

Armon Dadgar: Yes, exactly. But with that market, people were so far down the VMware road that it didn’t matter that Dell owned them.

TPM: Are you the true and only Switzerland at this point when it comes to platforms? I had a hope for Mesos and OpenStack and was waiting for a Kubernetes stack to emerge. I thought somehow these might glue together in an interesting way, but they didn’t Borg well, so to speak.

Armon Dadgar: [Laughter] I think Red Hat may have had the best shot pre-IBM because they still weren’t affiliated with a cloud and they had CoreOS and its Kubernetes implementation. But since they have been part of IBM, they’ve got the Blue Wash effect, and I think that customers view Red Hat like they’re not really neutral.

TPM: With the Terraform 1.0 release, are you down to fit and finish and polish? Is this thing ready for prime time, ready for enterprise? That’s what 1.0 normally means.

Armon Dadgar: HashiCorp has historically been the most conservative vendor about what we call 1.0. And I think we tease ourselves about this because, like, most companies IPO before we consider a product 1.0. Look at the scope of Terraform. It doesn’t even make sense – we have well over 100 million downloads, a thousand provider integrations, and well over a thousand enterprise customers, and only now do we call this a 1.0 product? The bar that we set is very, very high.

TPM: I’ve never heard of it. It’s almost legacy software at this point. This is like your 4.0 release.

Armon Dadgar: We joke in the community that we were off by a decimal place and this is really release 10.0. [Laughter]

Look at all of our products. None of them have done it in less than five years. Our view is this: Until the products are broadly deployed with production hardening time, it should not be a release 1.0. Some of these projects we see come out of the Linux Foundation, it’s like the first code commit and the last code commit were five minutes ago and the project is 6.0 release. How can it be at 6.0? There’s no user running it in production and you’re calling this thing a 6.0? Give me a break.

TPM: How complicated is this stack? Is it as complex as OpenStack, as big as OpenStack? As you know, OpenStack had everything in there but a blender and the kitchen sink. . . .

Armon Dadgar: [Laughter] Please don’t associate us with OpenStack. . . .

TPM: OK, OK, I’m trying to get a comparison. So let’s use a Linux or Windows operating system, which has tens of millions of lines of code depending on how you count it and cut it.

Armon Dadgar: The Hashi stack is certainly millions of lines of code.

What is more important is what we call the Tao of HashiCorp, our design ethos. And I think a key piece of that design ethos was like the Unix philosophy: We want each tool to be simple, focused on a single thing, but then we make them composable. We could build the Hashi Platform, which might be a monolithic, OpenStack kind of play, but we want to be super-conscious to do the exact opposite.

Each of these tools will do one thing and do it really well – and then compose with the others. So if I look at Terraform, it’s still very, very focused on just the provisioning. Give me an infrastructure as code definition of what you want it to look like, Terraform will figure out how to get there – and it will do the provisioning and lifecycle engine, but it stops there. It’s not going to do image management. It doesn’t do patching. It doesn’t do app deploy. If you want an image management tool, we have Packer. You want service discovery and network automation, we have Consul. You want app deploy? Use Kubernetes or Nomad. We didn’t want the OpenStack effect where this is a monolithic, big bang code base – all in or all out. These are very narrowly focused, do one thing and do it elegantly rather than try to boil the ocean.
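To make the "tell me what you want and the tool figures out how to get there" idea concrete, here is a minimal Python sketch of a declarative plan step. It is only an illustration under stated assumptions: the `State` shape, the `plan` and `apply` functions, and the resource names are hypothetical, and this is not how Terraform itself is implemented.

```python
from typing import Dict, List, Tuple

# Hypothetical resource model for illustration: resource name -> attributes.
State = Dict[str, Dict[str, str]]


def plan(desired: State, current: State) -> List[Tuple[str, str]]:
    """Return the (action, resource_name) steps that move current toward desired."""
    steps: List[Tuple[str, str]] = []
    # Anything declared but missing is created; anything that drifted is updated.
    for name in sorted(desired):
        if name not in current:
            steps.append(("create", name))
        elif current[name] != desired[name]:
            steps.append(("update", name))
    # Anything that exists but is no longer declared is deleted.
    for name in sorted(current):
        if name not in desired:
            steps.append(("delete", name))
    return steps


def apply(current: State, desired: State) -> State:
    """Carry out the plan on an in-memory copy and return the resulting state."""
    new_state = {name: dict(attrs) for name, attrs in current.items()}
    for action, name in plan(desired, current):
        if action == "delete":
            del new_state[name]
        else:
            new_state[name] = dict(desired[name])
    return new_state


# Hypothetical example: one resource to create, one to update, one to delete.
desired = {"web": {"size": "large"}, "db": {"size": "medium"}}
current = {"web": {"size": "small"}, "cache": {"size": "small"}}
print(plan(desired, current))
```

The point of the sketch is the narrow scope: the function only compares declared and actual state and emits lifecycle steps. Building images, patching, and deploying applications stay outside it, which mirrors the division of labor described above.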

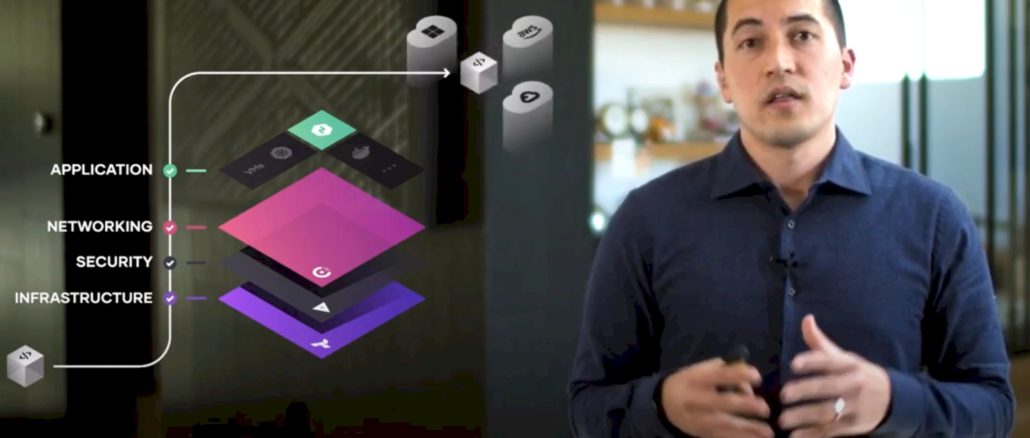

There are four layers that we care about: provisioning, security, networking, and app deploy. And there are layers that we think other people do really well, like observability and telemetry. Go use DataDog or Splunk or whatever. And we’re also not really in the preproduction world. So it’s great, let’s compose very tightly with GitHub and GitLab and Artifactory and the CI/CD vendors.

TPM: You’re not messing in the hypervisor. You’re not messing in firmware management for servers. You’re not messing in configuration of switches or any of that stuff.

Armon Dadgar: Or even configuration management. We integrate tightly with Ansible, Chef, and Puppet. Use Terraform for the provisioning, and then let’s integrate tightly with these. We deeply integrate with Kubernetes across the stack, but we have our Nomad alternative, which is our container and application scheduler.

TPM: Is Nomad better than Kubernetes?

Armon Dadgar: I would say, “Yes,” but obviously I am biased.

TPM: I assume you believe that or you wouldn’t bother. So then what is it that you’re doing with Nomad that is better than Kubernetes?

Armon Dadgar: For me, it comes down to three really simple things. One is just the elegance of experience. Kubernetes is OpenStack 2.0. It’s just as complicated. It’s just as vendor controlled. It’s just as foundation led.

TPM: It’s Seven of Nine. . . . Or Six of One and Half a Dozen of Another.

Armon Dadgar: It’s a horse designed by committee.

To me, I also find it so disingenuous the way they talk about Kubernetes. It’s the successor to Borg and Google took everything they learned from it. If that’s true, then why does everything still run on Borg inside of Google?

TPM: Google must have kept some of the good stuff.

Armon Dadgar: Let’s ignore the usability of Kubernetes, which is a mess. Let’s talk about its actual operational scalability. It’s also a joke. Borg runs on 10 million nodes, Kubernetes falls over if you have a few hundred. And so in what sense did Google learn from Borg when Kubernetes only scales to 1/1000th of what Borg does?

Only a few weeks ago, we published a two million container benchmark, which we did in partnership with Amazon Web Services. We had 100,000 CPU cores and 10,000 nodes and we deployed two million containers across it. In 2017, we did a million container version of this.
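For a sense of the density those benchmark figures imply, the short Python sketch below works out the ratios. It assumes the containers were spread evenly across nodes and cores, which the interview does not state, and the function name `benchmark_ratios` is hypothetical.

```python
def benchmark_ratios(containers: int, cpu_cores: int, nodes: int) -> dict:
    """Compute average densities, assuming an even spread (an assumption)."""
    return {
        "containers_per_node": containers / nodes,
        "cores_per_node": cpu_cores / nodes,
        "containers_per_core": containers / cpu_cores,
    }


# Figures quoted in the interview for the two million container benchmark.
ratios = benchmark_ratios(containers=2_000_000, cpu_cores=100_000, nodes=10_000)
for name, value in ratios.items():
    print(f"{name}: {value:g}")

# Growth relative to the one million container run in 2017.
print(f"growth_vs_2017: {2_000_000 / 1_000_000:g}x")
```

On those assumptions, the run averages 200 containers per node, 10 cores per node, and 20 containers per core.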

Nomad actually operates at size and scale, and it’s actually based off of the Borg and Omega papers from Google. Ours is actually a true implementation of Borg as opposed to an implementation of Borg. And the user experience is just much simpler, more elegant.

TPM: What is the nerd equivalent of a mic drop? A laptop drop test? Whatever it is, that was one of them.