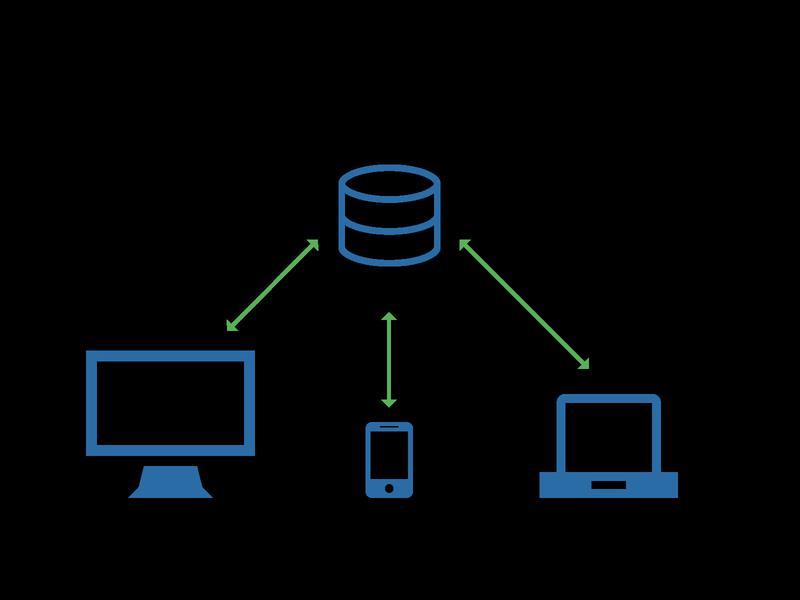

Client-server is a relationship in which one program (the client) requests a service or resource from another program (the server). In the late 20th century, the label client-server was used to distinguish distributed computing by personal computers (PCs) from the monolithic, centralized computing model used by mainframes.

Today, transactions in which a server fulfills a request made by a client are very common, and the client-server model has become one of the central ideas of network computing. In this context, the client establishes a connection to the server over a local area network (LAN) or a wide area network (WAN), such as the Internet. Once the server has fulfilled the client's request, the connection is terminated, although many protocols can keep it open for further requests. Because many client programs share the services of the same server program, the server typically runs as a daemon, a background process that waits for client requests.
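
As a rough illustration of the request, fulfill, and close cycle described above, the following Python sketch uses the standard `socket` module to run a one-shot server on localhost and send it a single request. The `RESULT:` reply format and the function names `serve_once`, `send_request`, and `run_demo` are hypothetical. A real daemon would loop on `accept()` and serve many clients, often concurrently; this sketch serves exactly one.

```python
import socket
import threading

def serve_once(listener: socket.socket) -> None:
    """Accept one client, fulfill its request, then close the connection."""
    conn, _addr = listener.accept()
    with conn:
        chunks = []
        while True:
            data = conn.recv(1024)
            if not data:              # client signalled the end of its request
                break
            chunks.append(data)
        request = b"".join(chunks)
        # Hypothetical "service": echo the request back in upper case.
        conn.sendall(b"RESULT:" + request.upper())
    # Leaving the 'with' block closes the connection.

def send_request(host: str, port: int, payload: bytes) -> bytes:
    """Connect to the server, send one request, and return the full reply."""
    with socket.create_connection((host, port)) as client:
        client.sendall(payload)
        client.shutdown(socket.SHUT_WR)   # tell the server the request is complete
        chunks = []
        while True:
            data = client.recv(1024)
            if not data:                  # server closed the connection
                break
            chunks.append(data)
    return b"".join(chunks)

def run_demo(payload: bytes) -> bytes:
    """Start a one-shot server on a free localhost port and query it once."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))  # port 0: the OS picks a free port
        listener.listen()
        port = listener.getsockname()[1]
        server = threading.Thread(target=serve_once, args=(listener,))
        server.start()
        reply = send_request("127.0.0.1", port, payload)
        server.join()
    return reply

if __name__ == "__main__":
    print(run_demo(b"hello"))  # b'RESULT:HELLO'
```

The client half-closes its side with `shutdown(SHUT_WR)` so the server knows the request has ended; the server then answers and closes the connection, matching the terminate-after-fulfillment pattern in the text.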

In the early days of the internet, most network traffic flowed between remote clients requesting web content and the data center servers that provided that content. This traffic pattern is referred to as north-south traffic. Today, with the maturity of virtualization and cloud computing, network traffic is more likely to be server-to-server, a pattern known as east-west traffic. This, in turn, has shifted administrators' focus from a centralized security model designed to protect the network perimeter to a decentralized model that concentrates on controlling individual user access to services and data and on auditing user behavior to ensure compliance with policies and regulations.
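
To make the north-south versus east-west distinction concrete, here is a minimal Python sketch that labels a flow by its endpoints, assuming that "inside the data center" simply means an address falls within a set of internal CIDR ranges. The ranges in `DATA_CENTER_NETS` are hypothetical placeholders (two RFC 1918 private blocks); a real deployment would substitute its own.

```python
import ipaddress

# Hypothetical data-center address ranges; replace with your own networks.
DATA_CENTER_NETS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
]

def is_internal(addr: str) -> bool:
    """Return True if addr lies inside one of the data-center ranges."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in DATA_CENTER_NETS)

def classify_flow(src: str, dst: str) -> str:
    """Label a flow east-west if both ends are internal, otherwise north-south.

    Assumes every flow seen has at least one end in the data center.
    """
    if is_internal(src) and is_internal(dst):
        return "east-west"      # server-to-server inside the data center
    return "north-south"        # crosses the data-center boundary

if __name__ == "__main__":
    print(classify_flow("10.1.2.3", "10.4.5.6"))      # east-west
    print(classify_flow("203.0.113.7", "10.1.2.3"))   # north-south
```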

An important advantage of the client-server model is that its centralized architecture makes it easier to protect data with access controls enforced by security policies. Also, the clients and the server do not need to run on the same operating system, because data is exchanged through client-server protocols that are platform-agnostic.
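
One way protocols stay platform-agnostic is by fixing the on-the-wire byte layout, for example sending integers in network (big-endian) byte order regardless of the host CPU's native order. The Python sketch below shows this with the standard `struct` module; the message layout (a 4-byte ID followed by a 2-byte payload length) and the function names are hypothetical, chosen only for illustration.

```python
import struct

# "!" selects network byte order (big-endian) with standard sizes and no padding:
# I = 4-byte unsigned int (message ID), H = 2-byte unsigned short (payload length).
HEADER = struct.Struct("!IH")

def encode_message(msg_id: int, payload: bytes) -> bytes:
    """Build a frame whose header bytes are identical on any platform."""
    return HEADER.pack(msg_id, len(payload)) + payload

def decode_message(frame: bytes) -> tuple[int, bytes]:
    """Parse a frame produced by encode_message on any platform."""
    msg_id, length = HEADER.unpack(frame[:HEADER.size])
    return msg_id, frame[HEADER.size:HEADER.size + length]

if __name__ == "__main__":
    frame = encode_message(1, b"hi")
    print(frame)                  # b'\x00\x00\x00\x01\x00\x02hi'
    print(decode_message(frame))  # (1, b'hi')
```

Because both ends agree on the byte order, a little-endian client and a big-endian server decode the same header identically.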

An important disadvantage of the client-server model is that the server can become overloaded if too many clients request data from it at the same time. Besides causing network congestion, an excess of requests can exhaust the server's resources and result in a denial of service.
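
A common mitigation for overload is admission control: cap the number of requests a server handles at once and reject the rest quickly rather than letting them pile up. The Python sketch below does this with a non-blocking `threading.BoundedSemaphore`. The class name `AdmissionLimiter` and the `"503 busy"` rejection value are hypothetical placeholders, not part of any particular server's API.

```python
import threading
from typing import Any, Callable

class AdmissionLimiter:
    """Reject new requests once max_in_flight requests are already running."""

    def __init__(self, max_in_flight: int) -> None:
        self._slots = threading.BoundedSemaphore(max_in_flight)

    def try_handle(self, handler: Callable[..., Any], *args: Any) -> Any:
        # Non-blocking acquire: if no slot is free, shed the request at once.
        if not self._slots.acquire(blocking=False):
            return "503 busy"   # hypothetical "server too busy" reply
        try:
            return handler(*args)
        finally:
            self._slots.release()   # free the slot even if the handler fails

if __name__ == "__main__":
    limiter = AdmissionLimiter(1)
    # While one request holds the only slot, a second one is rejected.
    print(limiter.try_handle(lambda: limiter.try_handle(lambda: "ok")))  # 503 busy
    print(limiter.try_handle(lambda: "ok"))                              # ok
```

Rejecting early keeps the requests already admitted responsive; it does not by itself stop a distributed flood from saturating the network link.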

Clients typically communicate with servers using the TCP/IP protocol suite. TCP is a connection-oriented protocol, which means a connection is established and maintained until the application programs at each end have finished exchanging messages. TCP determines how to break application data into segments that networks can deliver, sends segments to and accepts them from the network layer, manages flow control, retransmits lost or corrupted segments, and acknowledges the data that arrives.

In the Open Systems Interconnection (OSI) communication model, TCP covers parts of Layer 4, the Transport Layer, and parts of Layer 5, the Session Layer.
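
The segmentation step can be sketched in a few lines of Python. As in TCP, each segment is tagged with a sequence number that counts bytes, so a segment's number is the offset of its first byte plus an initial sequence number. The fixed `mss` (maximum segment size) argument and the `initial_seq` default of 0 are simplifying assumptions: real TCP negotiates the MSS and picks a randomized initial sequence number, and this sketch does not model acknowledgements, retransmission, or flow control.

```python
def segment(data: bytes, mss: int, initial_seq: int = 0) -> list[tuple[int, bytes]]:
    """Split data into (sequence number, chunk) pairs of at most mss bytes.

    Sequence numbers count bytes: each one is the offset of the chunk's
    first byte, shifted by initial_seq.
    """
    if mss <= 0:
        raise ValueError("mss must be positive")
    return [
        (initial_seq + offset, data[offset:offset + mss])
        for offset in range(0, len(data), mss)
    ]

if __name__ == "__main__":
    print(segment(b"abcdefg", 3))
    # [(0, b'abc'), (3, b'def'), (6, b'g')]
```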

In contrast, IP is a connectionless protocol, which means there is no continuing connection between the communicating endpoints. Each packet that travels through the Internet is treated as an independent unit of data, unrelated to any other. (Packets that arrive out of order are put back in sequence by TCP, not by IP.) In the OSI model, IP belongs to Layer 3, the Network Layer.
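
The reordering that TCP performs on top of IP can be approximated as follows: buffer segments by sequence number, then hand the application only the contiguous bytes starting at the expected number. This Python sketch assumes (sequence number, chunk) pairs whose boundaries never overlap partially; real TCP also trims overlapping data and requests retransmission of the missing bytes.

```python
def reassemble(segments: list[tuple[int, bytes]], initial_seq: int = 0) -> bytes:
    """Rebuild a byte stream from segments that may arrive out of order.

    Duplicates are harmless, and delivery stops at the first gap, because
    only contiguous bytes can be passed up to the application.
    """
    buffered: dict[int, bytes] = {}
    for seq, chunk in segments:
        buffered[seq] = chunk            # a duplicate overwrites the same entry
    out = bytearray()
    expected = initial_seq
    while expected in buffered:
        chunk = buffered.pop(expected)
        out += chunk
        expected += len(chunk)           # next byte we are waiting for
    return bytes(out)

if __name__ == "__main__":
    print(reassemble([(6, b"g"), (0, b"abc"), (3, b"def")]))  # b'abcdefg'
    print(reassemble([(0, b"abc"), (6, b"g")]))               # b'abc' (gap at 3)
```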

Other program relationship models include master/slave and peer-to-peer (P2P). In the P2P model, each node in the network can function as both a server and a client. In the master/slave model, one device or process (known as the master) controls one or more other devices or processes (known as slaves). Once the master/slave relationship is established, the direction of control is always one way, from the master to the slave.
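
The dual role of a P2P node can be shown with an in-process Python sketch: each `Peer` object answers requests (its server role) and also issues them to other peers (its client role). The `Peer` class, its `serve` and `fetch` methods, and the file-sharing scenario are hypothetical, and the sketch replaces the network with direct method calls to keep the idea visible.

```python
from typing import Dict, Optional

class Peer:
    """A node that acts both as a server and as a client."""

    def __init__(self, name: str, files: Dict[str, bytes]) -> None:
        self.name = name
        self.files = dict(files)

    def serve(self, filename: str) -> Optional[bytes]:
        """Server role: answer another peer's request for a file."""
        return self.files.get(filename)

    def fetch(self, other: "Peer", filename: str) -> bool:
        """Client role: request a file from another peer and keep a copy."""
        data = other.serve(filename)
        if data is None:
            return False
        self.files[filename] = data
        return True

if __name__ == "__main__":
    a = Peer("a", {"x.txt": b"from a"})
    b = Peer("b", {"y.txt": b"from b"})
    print(b.fetch(a, "x.txt"))   # True: a served, b was the client
    print(a.fetch(b, "y.txt"))   # True: roles reversed
```

Unlike the client-server case, no single node is the designated server: after the two fetches, either peer can serve both files.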
